Imagine it’s Sunday morning and you’re casually checking your Google ranking by typing your domain name into Google, only to discover that your website has thousands of strange Japanese pages and your money-making pages are nowhere to be found.

This is exactly what happened today to a client. Their new website that we just optimized got infected with an annoying malware that rewrote their entire Sitemap, submitted it to Google for indexing, and displaced their existing pages. This caused an immediate drop in ranking, and lower ranking inevitably means loss of sales.

Unfortunately, there are skilled folks out there who hack their way to money by stealing other websites’ traffic. By ruining someone else’s Sunday, these folks pretend they are providing a good SEO service to their clients, and they don’t care that stolen traffic doesn’t generate sales. It just robs one website of its hard-earned traffic, ruins the dreams of an unsuspecting yet paying client, and upsets thousands of web developers across the world who are hired to clean up the damage. In such a scenario, everybody loses. Except for the bad guy. They get to brag about how much traffic they brought to their client.

As you might have guessed, my Sunday was ruined by such a person, and I’d like to share this experience, hoping to help at least a handful of business owners to avoid ruined Sundays as much as possible. This happened on an ecommerce website that was not properly maintained for years, and it infected all other websites on the server, causing measurable damage for the owner of the online shop.

Here’s how the infection happened, how it spread, what sort of damage it did, and how we cleaned up the mess. But before all that, a bit of history, and a bit of info on what we were hired to do.

“I got a friend who has a slow site, can you click around to make it work faster without a rebuild?”

We were recommended to this client by another client of ours, who had been keeping an eye on my social activity around the topic of SEO. He has an eCommerce business with over 2000 products, and needed a reliable agency to take his SEO and PPC beyond the level of freelancers. We talked, and two months later he was so pleased that he started referring contacts to us for just about anything digital.

This is how we got in touch with the client/project I want to talk about. They have an eCommerce website with some 5000 products, but since it was multilingual (WPML), it had 10,000+ of them. The client used a custom order processing/CRM system that relied on a customized email trigger that sent a formatted data stream (Woo email notifications) to their custom CRM. There was even a plugin to load a different logo on a mobile device. It was THE messiest build we’ve ever seen. About 100 active plugins and a homepage that was querying WooCommerce products even for generating the menu (UberMenu). There was no revision control, no image optimization, and no speed setup of any sort. As there were no license renewals, the entire Woo stack was outdated and stuck in 2017. It was one of the most creative ways of adding as many plugins as possible and having a reason for each one, even though most of them could easily be dumped and replaced with media queries and a few lines in the child theme’s functions.php.

A messy build like this, running on software that was never updated, meant that the site was ridiculously slow, nearly impossible to extend with new features, and risky to update. There were some 80 slow database queries (Query Manager) that made the site take about 20 seconds to load, and the server was regularly crashing with 500 errors.

We were hired to make the site work as fast as it can, without doing a full rebuild. This meant:

- cleaning out the multilingual support, which they didn’t need anymore, dropping the product count to about 5000

- cleaning out old products they didn’t sell anymore, further reducing the product count to 3042

- removing post revisions to reduce the database size from some 3GB down to 900MB

- database repair and optimization (phpMyAdmin)

- removing all unused images+thumbnails from old posts/products

- image optimization of the ones that are still in use (SmushIt)

- introducing the miracle of CSS media queries instead of a dozen plugin-based mobile responsiveness hacks

- removing duplicate plugins and adding functions.php and native Woo features to get the “customization” provided via some 20+ plugins

- updating the remaining 50 plugins, the theme and WP core

- running a full site crawl to discover any 404s

- comparing old vs new sitemap to verify all’s good (Screaming Frog and custom Excel worksheets)

- thumbnail regeneration (because automated unused image removal is a nuke strategy, not a precision strike, so collateral damage is inevitable)

- wp-based speed-up with gzip, css/js cleanup, html cleanup, pushing some imagery to wp.com (jetpack)

- cloudflare-based caching to further speed things up and relax our server (VPS Fast Comet)

- post-build speed verification to make sure we didn’t miss anything (Pingdom, GTmetrix)
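The old-vs-new sitemap comparison from the list above can also be scripted instead of done in spreadsheets. What follows is only a minimal sketch, not the workflow we actually used: it assumes both sitemaps are plain `<urlset>` files in the standard sitemaps.org namespace, and the function names are made up for illustration.

```python
import xml.etree.ElementTree as ET

# Standard sitemap namespace defined by sitemaps.org.
SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"


def sitemap_urls(xml_text: str) -> set[str]:
    """Return the set of <loc> values found in a sitemap XML string."""
    root = ET.fromstring(xml_text)
    return {
        loc.text.strip()
        for loc in root.iter(f"{SITEMAP_NS}loc")
        if loc.text
    }


def compare_sitemaps(old_xml: str, new_xml: str) -> tuple[set[str], set[str]]:
    """Return (missing, added): URLs only in the old map, URLs only in the new."""
    old_urls = sitemap_urls(old_xml)
    new_urls = sitemap_urls(new_xml)
    return old_urls - new_urls, new_urls - old_urls
```

Anything in `missing` is a candidate 404 after the cleanup, and anything in `added` is a URL nobody on the team should be surprised by.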

Here’s a screenshot of my trusty Pingdom tool to measure speed and find bottlenecks. As we can’t alter the design of the website, and we can’t eliminate the excessive use of images that are queried from WooCommerce products and categories, it’s still a ridiculously massive website with some 160 HTTP requests and a homepage footprint of about 9MB. Still, for such a large page, the load speed got a serious boost:

You’ll notice that the client website is pinged from Germany. This is because the Frankfurt testing server is geographically closest to the target audience of the website. BTW, if it wasn’t for Google Translate auto-localizing pages in Chrome, we’d have no idea what we’re looking at.

I’ll probably (eventually) write up a better report for this optimization project as we achieved spectacular results. We eliminated all the slow queries with proper database optimization procedures (way beyond any plugin’s ability), we crunched the data footprint of the site from some 30GB down to about 15GB, and we got the site loading in about 5 seconds instead of 20. Then, it came down to flipping the switch on the old site and launching the optimized version.

How did the infection spread across the server?

We built the new version on a new Fast Comet VPS owned by the client (instead of the previous setup, where the dev company owned the hosting), so we had full control and the entire server at the website’s disposal. The production instance sat in the root of the server, with the main domain tied to this WP instance. A second instance was the Staging environment, a copy of the Production version that we use to test updates and regular product-related data imports before deploying them to the live site. Then there was a third instance, required by the client: the existing/old website, moved onto the new server as a fallback in case the new/optimized version crashed. The client is making about 500 sales per day, and each order is a 3-figure deal at least, so there’s a lot of money on the line and we can’t afford downtime.

When the time came to flip the switch on the new server, all it took was to set up a new Cloudflare account (it imported all the settings from the old Cloudflare account, MX records included), edit the A record with the new server’s IP, and update the DNS settings at the domain registrar. We already had the other subdomains ready to welcome the new root domain, and in about 6 hours the DNS edits finally propagated globally. We started the migration at the end users’ “midnight” so the client would not lose any sales, as they were serving a local market.

What we didn’t realize at the time is that the old site was infected with malware. As we wrapped things up in the morning, within the first few minutes of public life of our version of the site, we already had orders coming in. We hadn’t slept through the night, as bizarre server outages kept popping up at every step (it turned out there was a server misconfiguration that Fast Comet had missed for some reason), but we finally did the last ping to test the speed and established that the site was humming along at an average 3.3-second load time and customers were able to buy.

Then…disaster.

The site again had slow queries popping up, it became unstable, and around noon, it crashed. With all the optimization, all the tweaks, all the caching setup, for a strange reason, the site went unresponsive and then offline.

We quickly realized that the index.php file was corrupted. We replaced the corrupt index.php file with the clean/default WP file, and the site sprang back up, healthy and fast as ever. Inspecting the situation further (using Activity Log and the server log files), we established that no human error had caused the infection, nor was there any unusual activity in the server logs that could explain it. Then it dawned on us that, on a clean server environment and a refurbished site that had been stable for over a month, it must have been something we uploaded just moments before flipping the switch. And that something ended up being the old/original site of the client.

Scanning the old site for malware showed that its index.php file was also infected, with a much older Last Edited timestamp than the index file on our version, and that there were another 70+ infected files in the wp-includes folder. Since we didn’t need the old version of the site, we killed the subdomain and that got rid of the infection. For the time being.

What were the consequences of the infection?

The malware infection had a very weird outcome. It had a built-in delay of 3.2 seconds and a sitemap injection/cloaking of a Japanese eCommerce website. As a result, the website’s sitemap.xml file was completely rewritten with URLs on our domain name that were fed with data from this Japanese eCommerce website, loading their products as if they had been created on our website. Clearly, there were tons of links back to the Japanese site, and all the imagery was loaded from that site. These URLs were populated in a new sitemap.xml file, and the site would then ping Google with the sitemap URL as a force-reindex hack. Since the infection completely rewrote the original sitemap.xml, Google Console would get an entirely new set of URLs to index. Meaning, if undetected, the infection would ruin the organic traffic for this site, resulting in thousands of lost sales, and perhaps millions of dollars of lost revenue.

The screenshot above is the bogus sitemap.xml file that was sent to Google Console for force-indexing, with all 2000 URLs in it. Clearly this XML sitemap is odd-looking, at least for a WordPress website. But, in all honesty, how many of us ever wander off to check out the sitemap XML URL, right? This is why it’s good to have a weekly checklist and to check critical files, including the robots.txt file.
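A weekly check of critical files doesn’t have to be manual. Here is a rough sketch of the idea, assuming the files you care about (say index.php, .htaccess and robots.txt) are static files in the site root that rarely change; a sitemap generated on the fly by a plugin would need a different approach. The function names are my own and not part of any tool mentioned in this post.

```python
import hashlib
from pathlib import Path


def fingerprint(path: Path) -> str:
    """Return the SHA-256 hex digest of a file's contents."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def snapshot(root: Path, names: list[str]) -> dict[str, str]:
    """Record a fingerprint for each listed file that exists under root."""
    return {n: fingerprint(root / n) for n in names if (root / n).is_file()}


def changed_files(baseline: dict[str, str], current: dict[str, str]) -> list[str]:
    """List files that were modified, added, or removed since the baseline."""
    names = sorted(set(baseline) | set(current))
    return [n for n in names if baseline.get(n) != current.get(n)]
```

Take a `snapshot` right after a verified-clean deployment, store it somewhere off the server, and compare it against a fresh snapshot on a schedule. An index.php that shows up in `changed_files` without a WordPress update behind it deserves a closer look.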

This is a screenshot of one of these pages. Again, thanks to Google Translate we can figure out what this is. Their policy seems as sketchy as their marketing:

The infection bloated our Console index by an additional 2000 URLs. These URLs are all fed from another location, meaning you won’t find them if you search your site. This is a cloaking technique used by hackers to mask the actual origin and make the pages appear as if they’re coming from your website. This is why it’s important for your SEO department and webdev department to talk to each other. Otherwise, these kinds of infections would be messy to resolve. But I’ll get to that later.

Who was behind this infection?

Besides an unsuspecting client who hired a morally sketchy marketing agency from a large country in the Far East, the other helpers in this infection were a poorly maintained website and an unsuspecting webdev company that assumed the live site was safe to import into a clean server environment.

That being said, the author of the infection is written in the infection itself. The PHP script called on an external server with a request to get 2000 URL entries in the hacked sitemap.xml file. This external server therefore clearly packed and responded with a data package containing URLs to be cloaked. There’s a username and a password for the API login, so the author-company clearly has more than one client to build links and pull “organic” traffic for their unsuspecting clients.

The code snippet up here is a screenshot of an otherwise elaborate piece of code that gets the URLs, creates a new sitemap, pings Google with this new/dummy sitemap, and even puts it neatly in the robots.txt file for good measure. Website owners who don’t check their sitemap and robots.txt files would be losing traffic without knowing WHY this is happening.
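Since the malware also wrote its sitemap into robots.txt, one cheap tripwire is to check which sitemaps robots.txt advertises. The sketch below is an illustration under assumptions, not the detection we ran at the time: it works on the text of robots.txt (however you fetch it) and compares the `Sitemap:` lines against a set of URLs you know to be yours. Both function names are hypothetical.

```python
def sitemap_directives(robots_txt: str) -> list[str]:
    """Return the URLs named in 'Sitemap:' lines of a robots.txt body."""
    urls = []
    for line in robots_txt.splitlines():
        # Drop trailing comments, then split "Field: value" on the first colon.
        field, sep, value = line.split("#", 1)[0].partition(":")
        if sep and field.strip().lower() == "sitemap" and value.strip():
            urls.append(value.strip())
    return urls


def unexpected_sitemaps(robots_txt: str, expected: set[str]) -> list[str]:
    """Return advertised sitemap URLs that are not in the expected set."""
    return [u for u in sitemap_directives(robots_txt) if u not in expected]
```

If `unexpected_sitemaps` ever returns something, either a colleague added a sitemap without telling you or something else did.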

The only working URL that did something is the highlighted one. Typing it in redirects to an Indian web development/marketing agency that sells marketing packages for eCommerce websites, including email marketing. While I am not 100% convinced that this is the company behind the infections, it does point a finger at agencies where you buy a service as if it’s a product. Where you’re misled to believe that the deliverables you should expect are digital activities instead of business growth. Movement is not progress. Running in circles is movement, but it’s not getting you anywhere. It seems that whoever is making money using these dirty tricks (clearly not the website owner who expects sales from this stolen traffic) isn’t overly concerned with the fact that they are killing someone’s dream, and sinking someone’s reality. In the grand scheme of things, such agencies are thieves twice over. The first time, for stealing the dream from their unsuspecting client. The second time, for ruining the businesses of unsuspecting website owners who got this malware on their server.

How to do a quick-fix?

Scanning the site for malware (gotmls) immediately triggered the alarm as the index.php file in the root folder of the domain was infected. Then there were another 70 files that were infected. Gotmls cleaned up the infections, but the index.php file got corrupted and the website went completely down with a 500 Error response. It was clear that this error is not really a server error but a website misconfiguration.

These almost blank-screen 500 errors are caused either by a misconfigured .htaccess file or by a bad index.php file. We replaced the .htaccess with a default WP one, and did the same for the index.php file. This got the site back up in no time.

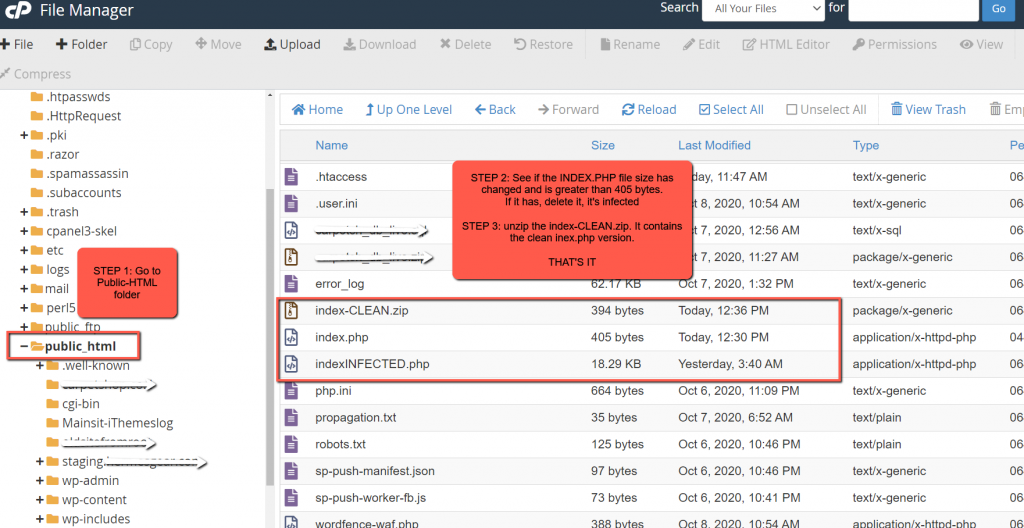

Once the malware removal was done and the new clean .htaccess and index.php were in place, the infection popped back up a few times, but this time only in the index.php file. At this point, we involved the hosting provider too and requested a server-side malware scan. Fast Comet responded quickly and initiated a server-wide scan. In the meantime, the quick and easy hack to deal with rewrites of the index.php file was to keep a clean, zipped index file on the server that we could easily unzip to the root, overwriting the existing/infected index file.

As you can see from the image above, I keep the zipped file handy, but I left the infected file too, renamed, of course, and with script execution blocked on the folder, so the file won’t fire up even if someone can trigger the URL.
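The unzip-and-overwrite routine can be scripted so it takes seconds at 3 AM. This is a minimal sketch, assuming a zip archive that contains a known-clean index.php at its top level; the function name and the paths in the usage note are placeholders, not what was on our server.

```python
import zipfile
from pathlib import Path


def restore_if_modified(clean_zip: Path, site_root: Path, name: str = "index.php") -> bool:
    """Overwrite site_root/name with the copy in clean_zip if the two differ.

    Returns True when the file was restored, False when it already matched.
    """
    with zipfile.ZipFile(clean_zip) as archive:
        clean_bytes = archive.read(name)

    target = site_root / name
    if target.is_file() and target.read_bytes() == clean_bytes:
        return False  # Nothing to do, the live file is still clean.

    target.write_bytes(clean_bytes)
    return True
```

A call like `restore_if_modified(Path("clean-index.zip"), Path("/path/to/site/root"))` would then put the clean file back only when it has actually been changed. Keep in mind this treats the symptom: it buys time while the real entry point is being found, and it will fail with a permission error if the target file has been made read-only.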

[Extra Update Dec. 17th 2020]

It turned out that disabling and deleting the File Manager plugin, which opened the floodgates in the first place, does not remove everything. There’s a file in wp-admin/users called kpi.php. This file is neatly copied not only here, but to other locations on the server too. The file is an open door for hackers to gain access to the server and inject new code, which practically reinfects the site with the same problem all over again.

The quick and easy way to get rid of this file, and of wp-config-samples.php, is to go to cPanel’s File Manager and run a search on the entire server for these two files. I found kpi.php in 3 locations, and wp-config-samples.php in 2 locations. Deleting these two files should be part of the cleanup procedure. Otherwise, you end up back at square one. The site is still vulnerable and it’s just a matter of time before it gets reinfected.
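If you have shell access rather than cPanel, the same search is a few lines of script. Treat this as a sketch: the two file names are the ones from this incident, the function name is made up, and the script only lists matches so that a human can review them before deleting anything.

```python
import os
from pathlib import Path

# File names seen in this incident; extend the set for your own cleanup.
# Note the trailing "s": WordPress itself ships wp-config-sample.php,
# which this set deliberately does not match.
SUSPECT_NAMES = frozenset({"kpi.php", "wp-config-samples.php"})


def find_suspect_files(root: Path, names: frozenset[str] = SUSPECT_NAMES) -> list[Path]:
    """Walk root recursively and return every file whose name is in names."""
    hits = []
    for folder, _subdirs, files in os.walk(root):
        hits.extend(Path(folder) / f for f in files if f in names)
    return sorted(hits)
```

Run it against the account’s home directory rather than a single site root, since in our case the copies were spread across the server.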

Google Console Cleanup

Next in line is the Google Console cleanup. As this malware dumped the old/existing sitemap and pinged Google with an entirely irrelevant sitemap with 2000 cloaked links, the ranking of your pages for specific search terms can drop. So it is extremely important to clean the bad URLs out of Google’s index and only then re-submit a clean sitemap.

Cleaning up the bad links is fairly simple. Since you already have them all in the sitemap.xml feed, all you need to do is copy the entire content of the page (a simple drag-to-select will do) and paste it into Rob Hammond’s XML Link Extractor tool. I took the 2000 URLs, ran them through the XML extractor, and got a clean table with links, timestamps and crawling importance. As you can’t copy a single column from an HTML table, I selected the whole table on Rob’s website and pasted it into Google Sheets. Then it was a simple matter of selecting only the URLs column and pasting it into Notepad. This way, you can quickly get an upload-ready TXT file to submit to Google Console’s URL Removal tool. There’s a Chrome addon that enables uploading a TXT file into the URL Removal Tool, which makes the entire process dead-simple.
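If you’d rather skip the copy-paste round trip, the same TXT file can be produced straight from a saved copy of the infected sitemap. This is a sketch of an alternative, not the route I took: it assumes the saved file is a standard `<urlset>` sitemap in the sitemaps.org namespace, and the function names and file names are placeholders.

```python
import xml.etree.ElementTree as ET
from pathlib import Path

# Standard sitemap namespace defined by sitemaps.org.
SITEMAP_NS = "{http://www.sitemaps.org/schemas/sitemap/0.9}"


def extract_locs(sitemap_xml: bytes) -> list[str]:
    """Return every <loc> URL in the sitemap, in document order."""
    root = ET.fromstring(sitemap_xml)
    return [loc.text.strip() for loc in root.iter(f"{SITEMAP_NS}loc") if loc.text]


def write_url_list(sitemap_path: Path, out_path: Path) -> int:
    """Write one URL per line to out_path and return how many were written."""
    urls = extract_locs(sitemap_path.read_bytes())
    out_path.write_text("\n".join(urls) + "\n", encoding="utf-8")
    return len(urls)
```

Something like `write_url_list(Path("infected_sitemap.xml"), Path("urls_to_remove.txt"))` gives you the one-URL-per-line file, and the returned count is a quick sanity check against the number of URLs you expected.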

Another option mentioned here is the deprecated_sitemap.xml method, which tells Google that all the links in that XML file are old and should be removed. Basically, all you have to do is download the infected XML sitemap file, rename it, upload it to Google Console, and ping Google with the URL, like so: google.com/ping?sitemap=mydomain.com/deprecated_sitemap.xml. Be careful to include http(s) in the URL, or whatever the default domain is for your website. I haven’t found any documentation on the deprecated-sitemap idea, but the author of the blog post claims that submitting the deprecated sitemap first, and then the clean sitemap again, removes the bad, now 404-ed URLs from Google’s index within a week or so.

How to prevent further malware infections?

A wise IT technician once said “only an offline computer is a secured computer”. For all the connected ones, the best we can do is close as many gates as possible and always have a disaster recovery strategy for solving the big problem first, and then dealing with the infection systematically. Just like the one I shared in the previous section. As far as a checklist goes… it’s long, so I won’t even attempt to be exhaustive here:

- Preventing infections like these starts with protecting key files. If you look at the far-right side of the screenshot above, you’ll notice that I changed the server-side file permissions from 644 down to 444, so the index.php file won’t be editable. This way, it won’t be as easy to change the content of the file as it is when it’s set to 644.

- Next is to run regular scans with whatever plugin you like to use. I find GotMLS to be a reliable plugin and it’s never let me down. It can wreak havoc, like in this case when it changed the content of an infected index.php file, but other than this incident, in over 10 years of work and countless virus/malware cleanups, we’ve never had a single hiccup with GotMLS.

- There’s also the inevitable tight setup of iThemes Security. Enforcing strong passwords, preventing folder listings, having brute force attack guards, etc., all without taking too much time to set up, is what makes this plugin my personal favorite, even though I’ve had great experiences with other plugins too.

- Keeping your WP core, themes and plugins up to date is perhaps one of the key steps in preventing security holes. The WP Security community is quite active, and there are new vulnerabilities discovered every week. With each new update, plugins, themes and WP itself address these issues and prevent hackers from exploiting code weaknesses.

- Password best practices should be a regular practice. Having one password to rule them all is not a good idea. Instead, using a password generator and keeping login details different for each site is a prudent practice. There are password libraries from hacked accounts, and the dumb passwords that are easy for people to remember are the first bet for brute force attacks.

- Password resetting at specific intervals is also a good practice. It does create a bit of extra work every 60-90 days, but a few minutes of password-resetting and updating your Chrome saved passwords is nothing compared to the loss you could face from a hacked website.

- Own a reliable hosting account. Notice that I use the word “OWN” here. Your website is among your top business assets. Keeping it on a rented place on your developer’s server is not a good strategy. People argue all the time, and IF this were to happen, you want to be in full control of key assets like the hosting account where you host your website. Cheap hosting providers aren’t a good idea. Instead, go for a more up-market solution that has 24/7 support with either Chat or tickets, or preferably both.

- Be a good person to work with. Web developers, hosting providers, security consultants… we’re all human. And you as a website owner can only gain from befriending whoever is taking care of your website. Granted, some folks will be more useful to be around than others, but choose wisely and then be good to techies. It can be the difference between them jumping on a Sunday morning to fix things vs you getting an automated “Out of Office” email.

- Keep a close eye on your Google Console. Early detection is paramount in malware management. You will want to know as early as possible if something is wrong with your website, and Google is quite useful for showing you any oddities, like your homepage suddenly becoming “unindexable” or a huge spike of crawled URLs without you changing anything structurally on the website.

- Run regular Safe-Web scans of your website using the Sucuri scanner or Norton. There are others too, but these two come to mind first. These tools will scan your site, and check a central database of infected websites. IF your website has been placed in this infamous database, you can clean up the infection and submit what is basically a Reconsideration request, which will then result in the red flag being removed from your website. Otherwise, your visitors will get that default warning that Chrome throws out that the site is not safe.
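The 644-to-444 permission change from the first item in this list can be applied and verified from a script as well as from cPanel. Below is a small sketch using only Python’s standard library; the helper names are my own. One honest caveat: a process running as the file’s owner can simply change the permissions back, so this raises the bar rather than locking the door.

```python
import os
import stat
from pathlib import Path

# Read permission for owner, group and others; no write, no execute (0o444).
READ_ONLY = stat.S_IRUSR | stat.S_IRGRP | stat.S_IROTH


def make_read_only(path: Path) -> None:
    """Set the file's permission bits to 444."""
    os.chmod(path, READ_ONLY)


def permission_bits(path: Path) -> str:
    """Return the file's permission bits as an octal string, e.g. '0o644'."""
    return oct(stat.S_IMODE(path.stat().st_mode))
```

Checking `permission_bits` on index.php as part of a regular routine is also a cheap signal: if a file you set to 444 is suddenly back at 644, something on the server changed it.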

Conclusion

Getting a project that wasn’t built right is always a hot potato. It usually is hotter than you think and ends up messier than you initially thought. Ask me how I know. Chances are, that if the site is using outdated plugins, theme and WP core, it’s probably infected with malware.

Sometimes, malware infections can bring down the site completely. Sometimes, they’ll just plant a bitcoin miner. Sometimes they’ll inject redirects in your own pages so your visitors get hijacked to another website. And, sometimes, your website’s sitemap.xml file will be rebuilt with a cloaked set of 2000 URLs that will confuse Google Console, and will freak out the webdev team and the owner.

But, when this happens (no worries, it’s just a matter of time), the best thing you can do is keep a cool head (yeah, it’s hard, ask me how I know) and be methodical about diagnostics and recovery. Use tools like malware scanners, security plugins, the trusty old backup, and don’t forget to communicate. The webdev department and SEO department will need to speak the same language so that you can have a two-pronged approach in early detection and fast recovery. Sometimes, webdevs won’t know what to do and how to quickly resolve issues if SEO folks don’t pitch in with creative solutions. And, sometimes SEOs that are techies at heart will be able to discover these infections relatively quickly if they do a daily round of Console clicking-around.

When all is said and done, I think that for businesses whose website presence is mission-critical like it is in ecommerce businesses, having a team of webdev and SEO experts is invaluable. If you can find such a team who will be crazy-dedicated to jump on board even on a Sunday morning, then you’ve got yourself a keeper, and you better do everything you can to keep them. It’s really hard to find a reliable team to support you as you develop your business. It’s far easier to find someone who’s in pain and needs a solution. Webdev/SEO teams aren’t all the same, and this is why you want to choose wisely.

The same way you pick your barber shop, or your car mechanic, or your general practitioner, or your lawyer, in the same way, you ought to pick a skilled, reliable, approachable, and dedicated team of web developers and SEO experts. Otherwise, you may wake up one Sunday morning, with a website that loses its footing in the niche you want, but suddenly gets an influx of overseas traffic for completely unrelated search terms.

Need a reliable web development and SEO team? Let’s chat. Maybe we’d be a good fit. If nothing else, at least you can get some useful insights into the industry and how to make a smart choice.